How to help kids outsmart social media algorithms

Social media companies can shape how kids think, feel, and behave online by influencing the content they see. Every video watched (or skipped), post liked, or comment made sends a signal to an algorithm, teaching it what to show next. Over time, this can lead to endless scrolling, targeted ads, and content that could be misleading, or even harmful.

The good news is that kids and parents can take steps to stay in control. By understanding how algorithms work, families can help children enjoy the benefits of social media while avoiding its common negative effects. From recognizing how engagement influences feeds to learning simple strategies for diversifying content, this guide shows practical ways to outsmart the algorithm.

ExpressVPN is launching this campaign to equip families with the tools they need to navigate digital spaces more confidently, including clear explanations of algorithms and strategies for mindful online habits. With awareness and a few simple steps, children can use social media in a safer way.

Understanding the algorithm

This guide covers everything you need to know about what the algorithm is and how it works. Understanding it can help your child successfully recognize when their feed becomes too repetitive or unhealthy.

What do we mean by “the algorithm”?

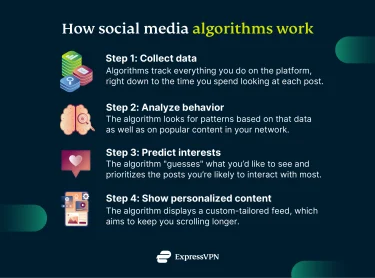

An algorithm is a set of mathematical rules that computers use to solve a problem or make decisions. In the context of social media, the algorithm determines what type of content appears on users' feeds and in what order, relying heavily on engagement signals like likes, comments, saves, and shares.

In other words, every time your child interacts with a piece of content, by liking, watching, commenting, sharing, skipping, or even pausing on a video, the platform receives a signal about their interests. These signals help the app decide what content to show next.

However, the algorithm wasn’t always there, at least not as we know it today. It evolved gradually as platforms grew. Back in the mid-2000s, platforms like Facebook and MySpace showed content in strict chronological order. What users saw depended purely on when it was posted, not on user behavior.

One limitation of the chronological model was that, as users followed more people and brands, their feeds became overloaded with information, and relevant content was often buried beneath a flood of newer updates.

In 2009, Facebook introduced EdgeRank, the first major algorithmic feed, which shifted the focus from recency to relevance, ranking content based on how compelling it would be to each user. By the mid-2010s, other platforms, like Instagram, had fully adopted the algorithmic feed, too.

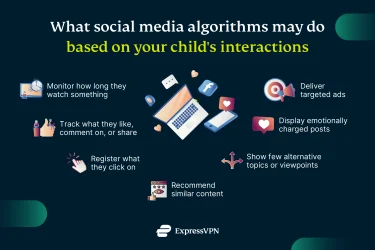

Today, social media feeds are powered by sophisticated machine-learning systems that evaluate thousands of signals in real time. Algorithms now track not just what your child "likes," but how long they pause on a photo, whether they rewatch a clip, or if they immediately scroll past a certain topic.

While each platform uses its own proprietary algorithm, there are common ranking signals that appear across many platforms. As of 2026, these signals have evolved into deep, AI-driven behavioral analysis.

- Engagement quality: While the algorithm registers every type of interaction, not all interactions are weighted equally. As a general rule, the more effort it takes for a user to perform an action, the higher the “quality” score the algorithm assigns to that post.

- Likes help refine a user’s interest profile and influence the type of content that appears in their feed.

- Comments and Replies signal an effort on the user’s part and are typically valued more than likes. Comments, questions, or replies that lead to conversation tend to strengthen the signal more than one-word or emoji-only responses.

- Shares are even more powerful because they act as a personal recommendation. Recent updates on platforms like Instagram have prioritized Direct Message shares as one of the most important signals for reaching new audiences.

- Watch Time and Rewatch Rate are some of the strongest signals on platforms that host video content: if a user watches a video from start to finish or rewatches it, it signals to the app that the video is extremely high-value, resulting in more videos of a similar nature being shown.

- Saves signal to the algorithm that the content has long-term value or use. When a child saves a post, the algorithm assumes they intend to revisit it, prompting it to show more similar content in feeds and recommendations.

- Dwell Time: Platforms track dwell time from the moment content appears in a feed until the user scrolls past, often capturing pauses in seconds or milliseconds. For example, if your child scrolls past a video within the first 1–2 seconds, the algorithm might take that as a “negative signal.”

- Behavior and Interaction History: If your child regularly messages someone or engages with their content, that person will likely stay at the top of their feed.

- Timeliness (Recency): While most platforms have shifted away from strict chronological feeds, when something was posted still matters. Fresh posts are given a temporary boost before engagement metrics ultimately decide how often that piece of content appears.

- Content Type: Algorithms prioritize formats users engage with most. If your child frequently watches videos to completion, the platform will likely prioritize video content in their feed.

There are also platform-specific preferences that influence how strongly certain formats are promoted. For example, TikTok favors short-form videos, Instagram boosts carousels, and YouTube traditionally rewards long-form content.
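To make the idea of weighted signals more concrete, here is a minimal Python sketch. It is purely illustrative: the weights, the two-second skip threshold, and the names `Interaction` and `engagement_score` are assumptions made up for this example, since platforms do not publish their real formulas. It only shows the general pattern described above, where higher-effort actions count for more and a quick skip counts against a post.

```python
from dataclasses import dataclass

# Hypothetical weights: actions that take more effort count for more.
# These numbers are invented for illustration, not taken from any platform.
WEIGHTS = {"like": 1.0, "comment": 3.0, "save": 4.0, "share": 5.0}
WATCH_WEIGHT = 4.0          # credit for each full watch of a video
QUICK_SKIP_SECONDS = 2.0    # scrolling past faster than this is a negative signal
QUICK_SKIP_PENALTY = 2.0


@dataclass
class Interaction:
    actions: tuple = ()          # e.g. ("like", "share")
    watch_fraction: float = 0.0  # 1.0 = watched to the end, 2.0 = watched twice
    dwell_seconds: float = 0.0   # time the post stayed on screen


def engagement_score(interaction: Interaction) -> float:
    """Combine one user's signals for one post into a single number."""
    score = sum(WEIGHTS[action] for action in interaction.actions)
    score += WATCH_WEIGHT * interaction.watch_fraction
    if interaction.dwell_seconds < QUICK_SKIP_SECONDS:
        score -= QUICK_SKIP_PENALTY
    return score


quick_skip = Interaction(dwell_seconds=1.0)
watched_and_shared = Interaction(actions=("share",), watch_fraction=1.0, dwell_seconds=30.0)
print(engagement_score(quick_skip))          # -2.0
print(engagement_score(watched_and_shared))  # 9.0
```

In this toy model, a video that is watched to the end and shared scores far higher than one that is skipped, so similar videos would be more likely to appear next.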

How does the algorithm work?

While the algorithm is heavily associated with social networks, it is not exclusive to them. Many other types of platforms and services rely on algorithms to tailor content to individual users.

For example, streaming services like Netflix and Spotify suggest movies, shows, and music based on viewing and listening patterns. E-commerce sites such as Amazon use algorithms to recommend products based on previous purchases.

Regardless of what platform they operate on, algorithms are not inherently harmful. Without them, users would be faced with endless streams of uncurated content, making finding relevant information especially difficult.

However, algorithms can have unintended consequences for children and teenagers, particularly on social media. By continuously showing content similar to what a user has already engaged with, they can create echo chambers. If that content is misleading or harmful, it may influence children’s understanding of the world, potentially shaping a distorted perception of reality.

Let’s go over how the algorithm works on a few major social networks.

YouTube

The YouTube algorithm tracks a range of signals, including which videos someone chooses to watch, how much of each video they view, and how they engage through likes, comments, or shares. Even small actions, such as pausing, rewinding, or skipping, help the algorithm build a profile of what a viewer finds engaging.

A major 2025 YouTube update introduced safeguards on video sequencing for teens. For one, parents have access to tools that help manage screen time. For another, the platform now actively limits how often certain types of content can be shown back-to-back, even if a teen watches or interacts with them repeatedly.

This change is designed to prevent “echo chambers,” where the algorithm continuously serves increasingly intense, repetitive, or emotionally charged videos on the same topic.

For example, if a teen starts watching videos about beauty standards, the algorithm may introduce different types of content to break repetitive patterns. After several similar videos, it injects content from totally different categories to try to prevent the child from getting stuck in repetitive or potentially harmful content loops.

These safeguards primarily apply to teen accounts and are focused on specific sensitive topics, such as body image or emotionally charged content. YouTube may use AI to determine whether someone is under 18 based on a variety of signals, like the types of videos watched and how long the account has existed.
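For readers curious about what limiting back-to-back content could look like in practice, the sketch below shows one simple way to do it. This is not YouTube's actual method, which is not public. The function name `limit_back_to_back` and the limit of three similar videos in a row are assumptions chosen for illustration.

```python
def limit_back_to_back(ranked_videos, max_run=3):
    """Reorder (title, topic) pairs so that no topic appears more than
    max_run times in a row, while otherwise keeping the original ranking."""
    remaining = list(ranked_videos)
    feed = []
    run_topic, run_length = None, 0
    while remaining:
        # Take the highest-ranked video that would not extend an over-long run.
        pick = next(
            (v for v in remaining if not (v[1] == run_topic and run_length >= max_run)),
            remaining[0],  # only same-topic videos are left, so fall back to the top one
        )
        remaining.remove(pick)
        feed.append(pick)
        if pick[1] == run_topic:
            run_length += 1
        else:
            run_topic, run_length = pick[1], 1
    return feed


ranked = [
    ("video A", "beauty"), ("video B", "beauty"), ("video C", "beauty"),
    ("video D", "beauty"), ("video E", "science"), ("video F", "beauty"),
]
for title, topic in limit_back_to_back(ranked):
    print(title, topic)
```

Here the science video, originally ranked fifth, is moved up to fourth place so that it breaks the run of beauty videos after the third one.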

TikTok

While many platforms rely on who you follow to create your feed, TikTok’s For You page is based primarily on user behavior. Liking, commenting, sharing, and favoriting videos all influence what appears on the feed, but watch time is the strongest signal.

If a video is watched all the way through or replayed, TikTok interprets this as strong interest and starts recommending similar content.

Instagram

Instagram doesn’t have a single algorithm; instead, it uses multiple AI-powered ranking systems for different sections of the app.

- Feed: The main feed (the first screen shown when opening the app) prioritizes content from accounts your kids engage with most (like close friends, family, or people they follow), using signals derived from their past interactions and behavior.

- Stories: Stories disappear after 24 hours, which makes posting time an important factor in what appears first. That said, engagement matters just as much. Stories from people you interact with frequently are more likely to show up ahead of those from accounts you rarely engage with, even if they were posted earlier.

- Reels: The Reels algorithm tracks signals such as watch time, completion rate, and rewatches. The longer your child watches a reel on a specific topic, the stronger the signal to the algorithm that they’ll want more content on that same subject.

- Explore: The Explore page shows recommendations from people you don’t follow but may find interesting based on your past interactions, with the most recent and highly engaging content being prioritized.

X (formerly Twitter)

X offers two distinct timelines. The For You feed is fully algorithmic and may include posts from accounts users do not follow. The content is ranked by X’s AI model based on predicted relevance and engagement signals.

The Following feed is limited to posts from accounts a user follows and includes user-controlled sorting options, such as Most Recent or Popular.

With the updates implemented in late 2025, the For You feed was reinforced as the default experience for most users. This means that algorithmic ranking now plays a primary role in shaping what users see unless they actively switch timelines.

At the same time, the Following feed moved away from being strictly chronological by default, requiring users to manually select Most Recent to view posts in time order.

Facebook

Facebook’s algorithm uses a four-step process to decide what appears at the top of your News Feed:

- Inventory: The algorithm first gathers all potential posts that could appear on your child’s feed, including everything posted by their friends, the Groups they’ve joined, and the Pages they follow. It also looks at Recommended content (posts from people they don't even know but that the AI “thinks” they will find interesting).

- Signals: These are thousands of data points that Facebook analyzes to understand how relevant each post might be to each user.

- Predictions: Using signals, the algorithm makes predictions about how likely your child is to engage with each post.

- Relevance Score: Every post is given a relevance score. The higher the score, the higher it sits in your child’s feed.
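The four steps above can be pictured as a small pipeline. The Python sketch below is a simplified illustration, not Facebook's real system: the three example posts, the handful of signals, and the numbers inside `predict_engagement` are all invented assumptions, whereas the real system reportedly weighs thousands of data points.

```python
# Step 1, Inventory: every post that could appear, each with a few signals.
# (Step 2, Signals: only three are used here, all hypothetical.)
inventory = [
    {"id": "old_group_post", "from_close_friend": False, "is_video": False, "hours_old": 48},
    {"id": "page_video", "from_close_friend": False, "is_video": True, "hours_old": 1},
    {"id": "friend_photo", "from_close_friend": True, "is_video": False, "hours_old": 5},
]


def predict_engagement(post):
    """Step 3, Predictions: a made-up estimate of how likely the user is to engage."""
    probability = 0.1
    if post["from_close_friend"]:
        probability += 0.5   # assumed: the user interacts with this friend often
    if post["is_video"]:
        probability += 0.2   # assumed: the user tends to finish videos
    if post["hours_old"] > 24:
        probability -= 0.05  # assumed: older posts lose their freshness boost
    return probability


def rank_feed(posts):
    """Step 4, Relevance Score: the highest predicted engagement goes first."""
    return sorted(posts, key=predict_engagement, reverse=True)


for post in rank_feed(inventory):
    print(post["id"])
# friend_photo
# page_video
# old_group_post
```

Changing any single assumption, such as how much a close friendship counts, changes the order of the whole feed, which is why small habits can reshape what a child sees.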

What the algorithm is (and what it isn't)

Although algorithms play a major role in shaping our social media experience, they aren’t responsible for everything we see (or don’t see) online. Many other factors exist independently, but the algorithm can influence how prominently certain content appears or how often it is shown.

Bots

These are automated accounts designed to perform specific actions, sometimes mimicking human behavior. They can be used for legitimate purposes, such as helping brands with customer support, scheduling and managing content, or gathering analytics.

At the same time, bots can be misused. They may generate artificial engagement by boosting likes, comments, and shares, making posts or accounts seem more popular than they are. This can influence the algorithm into boosting misleading or harmful content, increasing the likelihood that it reaches children and teenagers.

Paid ads and sponsored posts

Paid ads on platforms like Facebook, Instagram, and TikTok operate through real-time auction systems where brands bid for ad space. Sponsored content, while labeled, is designed to blend into regular feeds.

These ads exist independently of the algorithm, but algorithms strongly influence who sees them, how often they appear, and where they are shown. By analyzing user behavior, such as clicks, viewing patterns, and device use, platforms optimize ad delivery to reach users most likely to engage.

Fake news

Fake news and misinformation are produced by individuals, organized groups, or bots. They exist independently of the algorithm. However, because these posts often have an emotional or sensational tone, they can spread rapidly, increasing the likelihood that the algorithm will show them to a larger audience.

Some platforms have implemented measures to limit the spread of false information. For example, X uses community fact‑checking (Community Notes) tools that rely on crowdsourced moderation to provide additional context for posts that may be misleading.

Platforms like Facebook and Instagram previously relied on a third-party fact-checking program, but have since begun replacing it in the U.S. with a crowdsourced system similar to the one used on X.

However, in many cases, platforms don’t simply remove content that has been identified as false. Instead, they usually reduce its visibility, attach warning labels, or provide additional context through fact-checks.

Even with these measures, the algorithm can continue to show such posts if they generate strong engagement, meaning misinformation can still reach a wide audience. This is why it’s especially important for kids and teens to check reliable sources before liking, sharing, or believing everything they see online.

Why do social media platforms use an algorithm?

Social media platforms present the algorithm as a tool designed to increase user satisfaction through personalization, but users are not the only ones benefiting from it. Platforms themselves gain in several ways from using algorithms to curate users’ feeds.

Algorithms analyze what kind of content users engage with most and use that information to customize each person’s feed. This keeps users scrolling and interacting for longer periods, which creates more opportunity for the platform to show ads.

Platforms make most of their revenue through real-time ad auctions (also known as real-time bidding), where advertisers bid to show their ads to specific audiences. Algorithms match ads to the people most likely to click on them. For example, makeup brands may target people who engage with beauty content.

The more effective ads are, the more interested companies are in bidding, which, in turn, means more revenue to social media platforms.
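As a rough illustration of how an ad auction can combine bids with predicted interest, consider the sketch below. Real auctions are more complex and differ between platforms. Ranking ads by bid multiplied by estimated click probability is an assumption made for this example, and the advertisers, bids, and probabilities are invented.

```python
def pick_ad(bids, click_probability):
    """Return the advertiser whose ad has the highest expected value for this
    user, assumed here to be bid (dollars per click) times chance of a click."""
    return max(bids, key=lambda advertiser: bids[advertiser] * click_probability[advertiser])


# Hypothetical bids, in dollars per click.
bids = {"makeup_brand": 2.00, "car_brand": 5.00}

# Hypothetical estimates for a user who mostly engages with beauty content.
beauty_fan = {"makeup_brand": 0.10, "car_brand": 0.01}

print(pick_ad(bids, beauty_fan))  # makeup_brand
```

Even though the car brand bids more, the makeup ad wins for this user because the estimated chance of a click is much higher. This is why detailed behavioral data is so valuable to platforms and advertisers.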

Social media algorithms under scrutiny: Notable incidents over the years

Social media platforms have been involved in a number of controversies, particularly in relation to showing inappropriate content and spreading misinformation. These controversies have prompted public debate about how algorithms operate and whether their impact is ultimately positive or negative.

Legal probe into TikTok (2025)

In November 2025, French authorities opened an investigation into TikTok. The inquiry was due to concerns that the platform’s recommendation algorithm might steer vulnerable users, including young people, toward detrimental content related to self‑harm and suicide.

The probe was launched in response to a parliamentary committee report as well as a number of complaints made by families and lawmakers. They highlighted how TikTok’s algorithm and content-moderation practices could expose minors to dangerous material and fail to sufficiently protect them.

Instagram algorithm glitch (2025)

In early 2025, Instagram’s algorithm experienced a glitch that caused some users, including teens, to see violent and graphic reels in their feeds, even with safety settings turned on. Meta, Instagram’s parent company, said the issue was caused by an error in its recommendation system and apologized after widespread complaints.

While the problem was fixed, the incident raised concerns about the platform's ability to protect minors and the challenges of moderating AI-driven algorithms.

Haugen leaks (2021)

In late 2021, former Facebook employee Frances Haugen leaked internal documents which showed that, in 2017, Facebook’s algorithm treated emoji reactions (including the angry face) as five times more “valuable” than simple likes when deciding what to show in users’ News Feeds.

While emoji reactions were just one of thousands of factors influencing the News Feed, the documents showed that the platform’s algorithm helped amplify emotionally charged posts.

According to the leaked documents, Instagram’s own research found that roughly one in three teenage girls who were already struggling with body image reported that using the platform made their concerns worse.

The company faced criticism for prioritizing engagement over the mental well-being of its youngest users.

Cambridge Analytica (2018)

In 2018, it was revealed that, a couple of years prior, political consulting firm Cambridge Analytica had harvested personal information from tens of millions of Facebook users through a personality quiz app.

The firm then used this data to build psychological profiles and micro-target political ads during campaigns, including the 2016 U.S. presidential election.

While Facebook's core feed algorithm wasn't directly manipulated, the scandal highlighted how algorithmic tracking of user behavior could be exploited for manipulation at a large scale.

Elsagate (2017)

The term Elsagate refers to various videos on YouTube and YouTube Kids that appeared child-friendly but were tagged or designed to evade safety systems and attract views. These videos featured characters from children’s shows and movies (like Elsa from Frozen) in bizarre or inappropriate scenarios.

As YouTube’s recommendation algorithms favor engagement, watch time, and keyword matching, these types of videos were often suggested to kids after watching legitimate children’s videos despite being inappropriate.

The controversy prompted YouTube to change its guidelines, including banning videos depicting children’s characters in inappropriate situations.

What the algorithm does to kids (and you)

The algorithm is primarily designed to serve users content that they’re likely to find interesting, which can have both positive and negative effects on young people using social media. Let’s go over the potential benefits and risks associated with these platforms.

Are children ready for social media?

Many psychologists and child development experts advise postponing social media use for as long as possible, typically past the age of 16. Despite that, a 2018 Common Sense Media report found that U.S. children begin using social media at an average age of 12.6 years.

Children are eager to start using these platforms as fast as possible so they can connect with their peers, and parents often allow them to do so for fear of their child being left out or missing out in some way.

But beyond socializing and entertainment, many children now encounter social media through school-related activities as well, from group chats and classroom announcements to educational videos and collaborative projects.

As a result, children are being exposed to algorithm-driven feeds at increasingly younger ages, often before they can comprehend how these systems work.

From a developmental perspective, school-age children are not fully equipped to navigate social media. Between the ages of 10 and 12, children experience significant brain changes, particularly in a part of the brain called the ventral striatum.

During this period, increases in “feel-good” chemicals like dopamine and oxytocin make them highly responsive to social rewards such as praise, acceptance, and likes or comments received online.

While social validation has a similar effect on adults, the critical difference lies in the prefrontal cortex, the region responsible for complex skills like impulse control, planning, and emotional regulation, which does not fully mature until the mid-20s.

This imbalance makes children especially vulnerable to compulsive use of social media and emotional distress, especially when they measure themselves against peers who appear to be more popular or receive more validation on these platforms.

In response to this, some countries and platforms have introduced age-related restrictions on social media use. In the U.S., TikTok, Instagram, Facebook, Snapchat, X, and YouTube require users to be at least 13 years old to create an account. This rule is tied to data privacy laws like the Children’s Online Privacy Protection Act (COPPA).

Other countries have even stricter regulations. In December 2025, Australia enforced a minimum age of 16 for social media use, placing legal responsibility on platforms to prevent younger users from creating accounts.

However, many children manage to get around these rules, sometimes through methods as simple as entering a false birthdate when creating an account.

A 2024 study commissioned by the U.K.’s Ofcom found that a third of children aged 8–17 lied about their age to use social media, with many younger users claiming to be 16 or older.

Parents can also play a part in allowing their children to circumvent restrictions on social media platforms by helping them create an account or allowing them to use their own profile. While often well-intentioned, this behavior can expose children to algorithm-driven content designed for much older users.

The benefits algorithms can bring

While often viewed through a negative lens, when used responsibly, social media and the algorithms that shape it can have a positive impact on children’s lives.

Social connection

Social media algorithms often prioritize content from friends, family, and accounts users interact with most. This helps children stay connected with people who matter to them, even across long distances, and introduces them to peers with shared interests.

According to a Pew Research Center survey of U.S. teens aged 13–17, 74% report feeling more connected to their friends' lives through these platforms.

Self-expression and creativity

Adolescence is a critical period for self-discovery and expression, and social media platforms can play an important role in that process.

When children develop creative content, be it art, music, poetry, or videos, and it receives positive engagement, the algorithm can recommend it to users who have shown interest in similar content, helping them reach audiences beyond their immediate social circles.

Education and information access

When children show interest in a specific topic, algorithms may suggest relevant educational content in engaging formats, such as short videos, infographics, and live streams.

This can be especially valuable for adolescents seeking health-related information; for example, exposure to quality fitness content can support young people in developing and maintaining healthy lifestyle habits.

For many adolescents, social media can serve as an accessible starting point for learning topics that may not be fully covered at school or openly discussed at home, such as mental health and emotional well-being.

When this information is accurate and well presented, it can help adolescents feel more informed, reduce stigma around these topics, and support them in making safe decisions about their health.

Support and community

Algorithms often recommend groups, accounts, and discussions based on shared identities or experiences. This can be especially meaningful for children from marginalized communities, such as LGBTQ+ youth or ethnic minorities, by helping them find supportive spaces and relatable role models. Seeing peers their own age navigating similar challenges can reassure children that they are not alone.

The risks associated with algorithms

Despite the benefits described above, algorithm-driven social media platforms also come with a range of potentially harmful effects that parents need to be aware of before allowing their children to use them.

Anxiety

The algorithm is designed to prioritize content that receives a lot of engagement, which can unintentionally encourage social comparison and contribute to anxiety in young people.

Children may feel inadequate when they compare themselves to peers who receive more validation through likes and comments, and they may feel pressured to create content that performs better. When the feedback they receive doesn’t match their expectation, it can negatively affect their self-esteem and provoke angst.

Young people often compare themselves to others whose lives appear to be better, whether due to physical appearance or lifestyle. This can trigger fear of missing out (FOMO), a feeling of anxiety or unease that arises when individuals believe others are having rewarding experiences without them.

Addiction

Algorithms are designed to keep users engaged by continuously presenting new content, often amplifying emotionally charged or sensational posts. For children, whose self-regulation is not yet fully developed, this can easily contribute to excessive and compulsive use of social media.

Research consistently shows that frequent social media use can activate the brain’s reward system by triggering dopamine release, in ways that share similarities with other habit-forming behaviors. While this effect can also occur in adults, teens are especially vulnerable due to their still-developing prefrontal cortex.

Echo chamber

The algorithm analyzes children’s past engagement to show them more of what they already engage with. This reinforces their beliefs and makes alternative viewpoints less visible over time, a phenomenon commonly referred to as an echo chamber.

For young users who are still developing critical thinking skills, this can lead to more one-sided or extreme opinions about certain topics that can be harmful or problematic. The risk is particularly high when these opinions form around divisive or sensitive topics like politics, social issues, or health.

Other than beauty standards and dieting trends, echo chambers for girls may also form around luxury, travel, or seemingly perfect influencer lifestyles. For instance, algorithms may repeatedly promote content that shows glamorous trips and expensive purchases, which can, over time, make teens feel like they are missing out and increase feelings of comparison or dissatisfaction with their own lives.

Another example is algorithms promoting “alpha male” influencers. This can reinforce rigid or potentially harmful views about gender roles.
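The feedback loop behind an echo chamber can be shown with a very small simulation. The sketch below is a deliberately simplified model, not how any real platform works: it assumes the child always engages with the topic that currently fills most of the feed, and that each engagement multiplies that topic's weight by a made-up `boost` of 1.5.

```python
def simulate_feed(interest, rounds, boost=1.5):
    """Return each topic's share of the feed after `rounds` of engagement.

    Assumed model: in every round the dominant topic gets the engagement,
    and the algorithm responds by multiplying its weight by `boost`.
    """
    interest = dict(interest)  # copy so the caller's data is left unchanged
    for _ in range(rounds):
        top_topic = max(interest, key=interest.get)
        interest[top_topic] *= boost
    total = sum(interest.values())
    return {topic: weight / total for topic, weight in interest.items()}


starting_interest = {"beauty": 1.2, "sports": 1.0, "science": 1.0}

before = simulate_feed(starting_interest, rounds=0)
after = simulate_feed(starting_interest, rounds=5)
print(round(before["beauty"], 2))  # 0.38
print(round(after["beauty"], 2))   # 0.82
```

In this toy example, a slight initial preference for beauty content grows from about 38% of the feed to about 82% after only five rounds, while the other topics are crowded out.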

Exposure to inappropriate content

Most social media platforms use AI tools to remove violent or inappropriate content, but some of this content can still slip through the filters and reach users.

If a child engages even slightly with borderline content (content that is not clearly illegal, but may still be harmful), the algorithm may recommend similar content, including fully inappropriate posts.

Taking control back

Before assessing whether your child may be affected by algorithm-driven content, it’s important to understand what a healthy social media experience looks like. This can also help you recognize the warning signs that may indicate unhealthy social media behavior.

What does a “healthy” algorithm look like?

For children, completely avoiding social media is often unrealistic, so parents and caregivers may choose to focus on making their time on these platforms as safe and positive as possible.

So, what should a healthy algorithm look like?

At its core, a healthy algorithm should reflect your child’s interests without limiting them to a narrow range of content or constantly pushing sensational or harmful content. Ideally, their feed should show a mixture of entertaining, educational, creative, and age-appropriate content. But how can this be achieved?

Because algorithms learn from behavior, creating a healthy algorithm starts with healthy social media habits. What children watch, like, search for, and how long they engage with certain content all shape what the algorithm shows them next.

Here are a few examples that suggest the algorithm is delivering a healthy and positive stream of content to your child’s feed:

- Diverse content: Your child’s feed shows a healthy variety of topics and perspectives instead of being dominated by one or two topics.

- Positive tone: After using social media, your child seems inspired and entertained instead of anxious, angry, or confused.

- Creativity over consumption: A healthy feed should inspire your child to put their phone down and do something creative, whether by trying a tutorial they’ve watched or exploring a new hobby inspired by a favorite influencer.

- Age-appropriate content: Material that’s suitable for your child’s age and developmental stage is prevalent, while inappropriate content is either absent or kept to a minimum.

- Meaningful interactions: You notice your child messaging friends or family more often than you see them endlessly scrolling through their feed.

How to spot algorithm-related red flags in your child

When a child’s relationship with social media is unhealthy or overly influenced by the algorithm, you may notice the following common behavioral red flags.

Irritability when away from devices

If your child becomes anxious or upset when they’re unable to check their phone, it may indicate an unhealthy dependence on social media.

Algorithms are designed to keep users engaged through unpredictable rewards, repeatedly delivering content they are likely to enjoy. This creates a continual loop of anticipation, leaving users, particularly young people, constantly craving an entertaining piece of content or expecting a digital interaction.

Less time spent on offline activities

If you notice your child spending less time on things they used to enjoy, whether it’s hobbies, sports, or hanging out with family, it’s another sign that social media is likely taking much more of their time than necessary.

When social media becomes more rewarding than real-world experiences, it may indicate that algorithm-driven content is capturing and holding their attention too strongly.

Excessive comparison to others

Many young people tend to compare their own lives to the carefully curated content they see online, which can leave them feeling inadequate, like they aren’t doing enough, or that they’re missing out.

If you notice your child becoming more self-critical, withdrawn, or unhappy after spending time on social media, it may be a sign that these comparisons are negatively affecting their self-esteem and emotional well-being.

Frequent mood swings

Frequent exposure to emotionally intense content on social media has been associated with mood fluctuations and increased emotional sensitivity, especially in children and adolescents.

If your child experiences frequent mood swings, such as intense highs from viral content followed by crashes triggered by comparisons or negative posts, this may indicate that their feed is unbalanced and amplifies emotional extremes.

Short attention span

Short-form content like Instagram Reels and TikTok videos is designed for quick, engaging consumption. While occasional exposure to this type of content is unlikely to cause harm, prolonged exposure has been associated with reduced attention spans in users.

For young people, whose brains are still developing, this can lead to difficulties concentrating, reduced patience, and even lower academic performance.

Altered sleep habits

By design, algorithms are meant to keep us scrolling and interacting for as long as possible, which can interfere with sleep. For children and adolescents, who require more sleep for healthy growth and brain development, this disruption can have more serious consequences than for adults.

Lack of adequate rest can affect mood, attention, memory, and academic performance. Over time, it may contribute to increased stress and anxiety. If your child is staying up late on their phone or waking during the night to check it, this may be a sign that they have become too dependent on social media.

What you can do to reduce the risks

As a parent or caregiver, there are various steps you can take to help minimize the risks of algorithm-driven content for children.

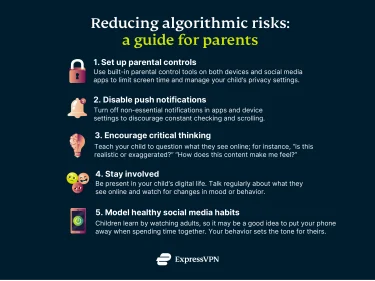

Set up age-appropriate filters and parental controls

Many social media platforms offer parental control settings that allow you to restrict content based on age or block inappropriate material.

The exact features vary by platform, but in general, parents can often set daily screen time limits, filter content that includes certain keywords, and control who can message, comment on, tag, or follow their child. Depending on the platform, parents may be able to see who their child is interacting with, though they typically cannot view the content of private messages.

Before setting up these safety measures, it is advisable to discuss them with your child and explain why they are being put in place. Open communication can reduce conflict and make children less likely to try to work around the rules.

Disable or limit push notifications

Push notifications are alerts sent to your child’s device, even when the app isn’t open, to tell them they have a new message, like, or comment. They can contribute to excessive social media use by prompting frequent checking.

These notifications can usually be turned off or limited through the device’s settings or within the app itself, helping prevent children from being constantly drawn back online.

Model healthy social media habits

Children often mimic adult behavior. Demonstrating responsible social media use yourself, like putting your phone aside during meals, limiting scrolling time, or prioritizing face-to-face interaction, can encourage similar habits in your child.

Encourage critical thinking

Explain to your child how the algorithm works and why certain types of content get recommended. Talk with them about how posts often aim to provoke strong emotions and gain engagement rather than present the truth. Encourage them to ask questions like: Does this post come from a trusted source? Is it trying to cause a reaction?

This can help your child develop the ability to question what they see, recognize misinformation, and identify exaggerated or extreme viewpoints.

Be involved in their digital world

Show interest in the platform your child uses as well as the people and sites they follow. This can give you insight into their online habits and the content they’re exposed to. You could also suggest educational or positive creators they can follow and advise them to unfollow accounts that trigger negativity.

Adjust boundaries

While all these strategies can help you reduce the harmful effects of algorithm-driven content on your child, they raise an important question: where is the line between respecting children’s privacy and protecting them from online risks?

While most people would agree that a child’s safety is a top priority, it is important to recognize that children, just like adults, have a right to privacy. Overly intrusive monitoring can invade that privacy and have the opposite effect: it may encourage secretive behavior rather than safer habits.

To find the right balance, it’s important that parents adjust boundaries as children mature. For younger children, closer supervision and stricter controls may be appropriate. For adolescents, transparency about why parental control measures are in place is key to building trust and mutual understanding.

Open conversations about the dangers of social media can help your child understand that implementing these measures is about protecting them rather than asserting control. This approach may help your child reflect on their choices, understand the consequences, and develop healthier online habits over time.

Fighting the algorithm

There are several actions that children can take themselves to reduce harmful or addictive content in their algorithmic feeds and turn them into a more positive, healthy space. The challenge is that many children are not aware of the dangers or the things they can do to prevent them.

We created the following section to help you start a conversation about this with the children in your care. If you like, you can use it as a script to talk to your teen about the harmful effects the algorithm can have on them. It also comes with a short quiz so that you and, if you choose, your child can test how much you have learned about algorithms.

Talking about it with your kid: A script you can use

Have you ever noticed how hard it can be to put your phone down sometimes? One reason is that your favorite social media platforms rely on algorithms, that is, sets of rules that track what content you engage with and then show you more of the same.

At first glance, that may not seem like a bad thing. The issue is that an algorithm’s primary goal isn’t just to keep you entertained or informed, but to keep you scrolling for as long as possible. It may show you content that can leave you feeling anxious, overwhelmed, or inadequate over time.

The good news is that algorithms aren’t entirely out of your control. You can train the algorithm to display content that is not only fun, but also interesting and positive. Turning your feed into a healthier online space where you can have a good time is fairly easy. Do you want to learn how?

Here are a few steps you can take to avoid falling into the algorithmic trap.

Be careful what you like, watch, and share

Every interaction you have on social media sends a signal to the algorithm about what you want to see more of. Quickly scrolling past a post tells it you’re not interested, and this can help reduce similar content in your feed. On some apps you even have a “show fewer posts like this” or similar button. We’ll get to it a bit later.

On the other hand, watching (especially in full), liking, commenting on, or sharing a post strongly signals interest, making it more likely you’ll see similar posts again. If a certain type of content makes you feel anxious or upset, the best response is often no response at all; simply scroll past and move on.
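For readers who like to see the idea spelled out, here is a toy model of how interactions could nudge a feed. It is only a sketch: the signal names and numbers in `SIGNAL_WEIGHTS` and the helper functions are made up for illustration, and real platforms do not publish how they weigh each action.

```python
from collections import defaultdict

# Hypothetical signal strengths, chosen only for illustration.
SIGNAL_WEIGHTS = {
    "skip": -1.0,
    "watch_full": 2.0,
    "like": 1.5,
    "comment": 2.0,
    "share": 2.5,
    "not_interested": -5.0,
}


def update_interests(interests, topic, action):
    """Nudge the score for a topic up or down based on one interaction."""
    interests[topic] += SIGNAL_WEIGHTS[action]
    return interests


def rank_feed(interests, candidate_topics):
    """Order candidate topics from highest to lowest interest score."""
    return sorted(candidate_topics, key=lambda t: interests[t], reverse=True)


interests = defaultdict(float)
history = [
    ("dieting", "watch_full"),
    ("dieting", "like"),
    ("music", "skip"),
    ("drawing", "watch_full"),
]
for topic, action in history:
    update_interests(interests, topic, action)

# Topics you engaged with the most rise to the top of this toy feed.
feed = rank_feed(interests, ["music", "drawing", "dieting"])
print(feed)
```

In this toy model, watching and liking dieting videos pushes that topic to the top, while a single strong negative signal would push it back down.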

Use the “Not Interested” button

Many apps let you tap options like “Not interested”, “Hide”, or “Show less of this.”

If you strongly dislike something you see, using these tools sends a clear signal to the algorithm that you don’t want that kind of content anymore.

Don’t be afraid to say you’re not interested; the algorithm will usually suggest other types of content for you, which you may actually enjoy and benefit from.

Mix up what you watch

If you focus on just one type of content, the algorithm can place you in a “filter bubble” and show you the same ideas and perspectives all the time. While this can seem normal or feel good at first, it also makes it difficult to come across other opinions or different topics.

For example, if you regularly watch videos about dieting or fitness, the algorithm may start showing more and more content on those topics. And if it is all you see when you open the app, you can get pulled in quickly. It can be hard to tell when the content you see starts getting too intense and is no longer healthy for you.

To avoid this, you can intentionally search for things you don’t usually watch, like a different hobby or a sport you’ve never tried. This may help diversify what the algorithm shows you. Also, avoid engaging with recommended content too much. Instead, check to see what the people you are actually following are posting.

Remember: learning about new things and different perspectives is good; there’s a whole world out there for you to discover.
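One rough way to picture a filter bubble is to count how much of a feed comes from a single topic. The sketch below assumes you have jotted down the topic of each of the last ten posts you saw; the `topic_shares` helper and the sample feeds are made up for illustration and are not taken from any real app.

```python
from collections import Counter


def topic_shares(feed_topics):
    """Return the fraction of the feed that each topic takes up."""
    counts = Counter(feed_topics)
    total = len(feed_topics)
    return {topic: count / total for topic, count in counts.items()}


# Hypothetical feeds: one dominated by a single topic, one more varied.
narrow_feed = ["fitness"] * 8 + ["music"] * 2
mixed_feed = ["fitness"] * 4 + ["music"] * 3 + ["drawing"] * 3

narrow = topic_shares(narrow_feed)
mixed = topic_shares(mixed_feed)

print(narrow)
print(mixed)
```

If one topic takes up most of the list, as in the narrow feed, that is a hint it may be time to search for something different.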

Turn off autoplay

On many social media platforms, videos play automatically as you scroll without you pressing play. This can sometimes lead you to watch content you didn’t mean to see.

You can usually turn off this feature, and that gives you more power. This way, even if an unexpected video appears in your feed, you get to decide if you watch it instead of the algorithm making that choice for you.

Don’t believe everything you see

Algorithms aren’t designed to check whether something is true; they are built to get a reaction. That’s why provocative content often appears in your feed. Before liking or sharing a post, consider whether the information is realistic and whether it comes from a trusted source.

Keep in mind that posts can be intentionally misleading, or even completely false, simply to grab your attention.

Take breaks

If you notice yourself scrolling endlessly without finding anything enjoyable or meaningful, it’s a good idea to put your phone down and focus on offline activities. You can try playing sports, reading, drawing, or taking on a new hobby.

This can give your mind a break from the constant stream of content, help you feel more in control of your time, and let you engage with things that genuinely make you happy and relaxed.

Talk about what you’re seeing

If something online confuses or upsets you, talk to a parent, teacher, or trusted adult. Algorithms don’t take your feelings into account, but people do.

They can help you make sense of what you’ve seen, put things in perspective, and guide you on what’s safe to engage with. Remember, it’s always okay to step away from content that feels overwhelming or upsetting; your well-being comes first.

Test your knowledge about the algorithm

Now that you’ve read our guide on how to navigate social media and algorithms, you can take this short quiz and show off what you’ve learned.

Empowering kids to use social media wisely

Social media algorithms aren’t going away, but understanding how they work can help you and your child stay more in control. By recognizing the signs of algorithm-driven content, like repeated extreme posts, echo chambers, or pressure to compare, parents can support healthier online habits.

The goal isn’t to eliminate social media, but to use it in a way that keeps the positives while reducing the risks. With awareness, boundaries, and open communication, kids can learn to outsmart algorithms and build a safer, more balanced relationship with the platforms they use every day.
