UK's Online Harms White Paper casts a cloud on free speech

The UK government has introduced a new regulatory framework that seeks to push companies with online platforms to regulate “harmful” material posted on their websites.

The Online Harms White Paper is determined to make the UK “the safest place in the world to go online,” but it fails to adequately consider the fundamental rights of online users in the process.

What is the white paper proposing?

The 102-page white paper, jointly published by the Home Office and the Department for Digital, Culture, Media and Sport, proposes that hosting companies be responsible for taking down “harmful content” on their platforms or else face hefty penalties and fines. In other words, Facebook will be held responsible for your status update.

The mandatory content rules will be enforced by a yet-to-be-defined independent regulator, who will have the power to issue fines, block access to noncompliant sites, and even hold a company’s senior management liable for allowing “harmful” content on its platform.

Vague definitions of key terms

While the paper describes at length the various “reasonable steps” companies would be required to take, it does a poor job of describing what is actually meant by “harmful content.”

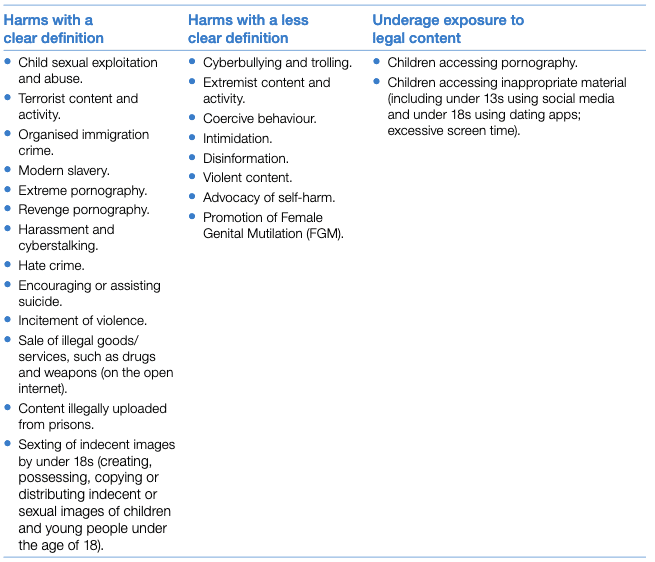

The proposal lists a table of online harms the government wants regulated.

Of particular concern is harmful content, such as trolling and cyberbullying, that is not illegal in the UK, even if it may be unwanted.

As Jim Killock, executive director of the Open Rights Group, told the Daily Mail, “We’re talking about banning content that the government won’t make illegal—it won’t legislate to ban it, but it wants companies to do so.

“They're saying, ‘We don't like Facebook, so we're going to give Facebook more power to regulate our content more,’ it's a terrible irony.”

There is also ample room for the government to expand the scope of what it considers to be harmful, regardless of that content's legality, and it can seemingly do so without any meaningful resistance. The independent regulator put in charge will “set out steps that should be taken” to “tackle cyberbullying,” but this process is not explored at all.

To top off all this uncertainty, legal-yet-harmful content would be restricted online but remain legal to publish offline. There is no explanation as to how this might be applied.

One thing seems clear, however: The fuzziness of the language in the white paper leaves this legislation ripe for online censorship and abuse.

No discussion of fundamental rights for online users

The proposal fails to properly discuss protections for fundamental rights such as freedom of expression and due process.

To be fair, the paper lists freedom of expression as a core value, and it requires the independent regulator to “take particular care not to infringe privacy or freedom of expression.” But in spite of the apparent value placed on free expression, the paper does not attempt to explore how the regulator should protect it.

There is also no discussion about due process or any acknowledgment of problems that could arise from the proposal.

Sweeping regulation affects platforms of all sizes

Lastly, the proposal holds all companies that “enable or facilitate users to share or discover user-generated content” responsible for the content on their platforms, including start-ups, small and medium-sized enterprises, and even charitable organizations.

To avoid being penalized, companies will likely introduce proactive measures, such as upload filters to prevent “harmful content” on sites.

Draconian as they are, filters are a luxury only the biggest companies can afford. Smaller companies lack the means to adequately police harmful content, and the extra costs they must bear to build highly regulated platforms are discouraging enough to stifle the growth of future online platforms.

White paper in consultation stage—have your say

The white paper is the first stage in making the proposals a legal reality. The UK government says it will seek advice from “legal, regulatory, technical, online safety and law enforcement experts” until July 1, 2019.

If the government wants to increase online safety, it may do so at the peril of fundamental freedoms that are barely mentioned in the paper. And this may be deliberate, as UK-based digital campaign organization Open Rights Group warns:

“Governments both repressive and democratic are likely to use the policy and regulatory model that emerge from this process as a blueprint for more widespread internet censorship.”

If you want to share your view on the Online Harms White Paper, you can respond through this link or with this email.
