The history of the internet: From its start to where it is today

The internet has become an integral part of our lives more rapidly than anyone could have imagined. In the late 1990s, it seemingly appeared out of nowhere and took the world by storm. Today, being connected to the internet is a part of everyday life for billions of people around the world. The internet looked like an overnight success, but by the time the dotcom bubble burst, it was already close to 40 years old.


Have you ever wondered how we got to where we are today?

Like many other innovations of the Cold War era, the development of the internet was driven by weapons and space travel. The computers that began to control these systems needed to be built to communicate with each other, be more resistant to outages, and most importantly, function without a single point of failure prone to sabotage or a weapon strike.

The threat of mutual annihilation required both the Soviet Union and the United States to maintain “second strike capability,” meaning the ability to efficiently launch weapons even when already being hit by a devastating attack. This cynical thinking required a network that could heal itself and allow any remaining parts to keep functioning. It had to be possible for anyone, anywhere, to bring the system back online.

When did the internet start?

The first concept paper to discuss a theory of computer networking that could replace the existing approach was written by J.C.R. Licklider of MIT in August 1962. This paper provided the theoretical framework that the internet needed to get off the ground: it put forward a vision of a global interconnected network with multiple access points, so that anyone on the network could access a vast amount of data and programs.

Licklider went on to head the computer research program at the Advanced Research Projects Agency (ARPA), an agency established under President Dwight D. Eisenhower to close the gap with the Soviets and prepare the U.S. for rapid advancements in military and space technology.

Licklider played a pivotal role in convincing his peers of the importance of this “galactic networking” concept. The concept gained further credence from the “packet transfer” theory, published by researchers Leonard Kleinrock and Lawrence Roberts.

Under the packet transfer network, data would be broken up into smaller nuggets, each of which would travel separately to its destination. It would then be reassembled at the other end in the correct order into the original piece of information.
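The idea can be illustrated with a minimal Python sketch. It is not how any real network stack is written; the function names `to_packets` and `reassemble` and the 8-byte packet size are assumptions chosen purely for illustration. Each packet carries a sequence number so the receiver can restore the original order even when packets arrive shuffled.

```python
import random
from typing import List, Tuple

Packet = Tuple[int, bytes]  # (sequence number, payload): an illustrative format


def to_packets(message: bytes, size: int) -> List[Packet]:
    """Split a message into numbered chunks of at most `size` bytes."""
    return [
        (seq, message[start:start + size])
        for seq, start in enumerate(range(0, len(message), size))
    ]


def reassemble(packets: List[Packet]) -> bytes:
    """Sort packets by sequence number and join their payloads."""
    return b"".join(payload for _, payload in sorted(packets))


message = b"Packets may take different routes to the same destination."
packets = to_packets(message, size=8)
random.shuffle(packets)  # simulate packets arriving out of order
assert reassemble(packets) == message
```

Real protocols add much more on top of this, such as retransmission of lost packets and checksums, which the sketch leaves out.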

Packet transfer (known as packet switching) is now the default method of data transfer across the internet, but initially had to prove itself against another idea: circuit switching. This concept, which is still at times used for telephone systems, requires the setup of a dedicated channel, a circuit, between the sender and the receiver, which is only closed when the data transfer is complete. While the circuit is open, no other data can be transferred alongside it.

To validate the theory that packets were a more efficient and robust method, researchers connected two computers: the TX-2 computer, located in Massachusetts, and the Q-32 in California, using a dial-up telephone line.

The results confirmed initial suspicions. The networked computers performed well together with the ability to retrieve data and run applications, but the circuit switching was slow, cumbersome, and deemed to be woefully inadequate.

At the same time, the U.S. government had awarded contracts to the RAND Corporation, asking it to come up with a proof-of-concept for secure voice and communication lines. The consulting body, operating independently of ARPA, also identified packet switching as the best mechanism for the purpose.

Final validation came in the form of a paper on the packet network concept by UK-based researchers Donald Davies and Roger Scantlebury. Since all the researchers had come to their conclusions without consulting each other, it proved that packet transfer was indeed the way forward.

The move towards securing communication networks was formalized under the umbrella of ARPANET. Early routing devices that enabled large-scale data transmission via packet transfer were called Interface Message Processors (IMPs); they were developed by military contractor Bolt, Beranek, and Newman (BBN) in 1969.

Today, packet switching makes it easy to send the data of millions of concurrent users over a single shared line. You can communicate with multiple servers at the same time and listen to music while you browse your emails, and your download can resume shortly after you turn on your VPN.

History of ARPANET

Leonard Kleinrock’s work on packet transfer theory meant that public trials of ARPANET involved his Network Measurement Center at UCLA. In the first phase of ARPANET, IMPs were installed at UCLA and at the Stanford Research Institute (SRI). The two nodes were connected and the first host-to-host messages were successfully transmitted between them. Users at UCLA logged in to Stanford’s systems and were able to access its database, confirming the existence of a wide area network.

By the end of 1969, ARPANET comprised a total of four nodes with two more added at UC Santa Barbara and the University of Utah.

By the end of 1970, the initial host-to-host protocol, called the Network Control Protocol, was complete, and ARPANET had expanded to a level where users could develop applications for the benefit of the entire network. A public demonstration of ARPANET followed at the International Computer Communication Conference (ICCC) in 1972.

The first application written for the system was a rudimentary email client developed by Ray Tomlinson of BBN in order to help developers working on ARPANET coordinate with one another. Email exploded in popularity as the number of people using ARPANET grew, and expanded functionality meant that users could forward, archive, and arrange messages much like the modern email clients of today.

Interconnected networks are born

One of the fundamental principles of the internet today is the concept of distributed architecture. The internet cannot be controlled by a central node and is architecture-agnostic, meaning that different networks with different system architecture can still communicate with one another and enable seamless data transfer.

ARPANET wasn’t designed for interconnectivity. Users within its network would be able to share data and applications but any other network attempting to connect to it would be prevented from doing so.

The development of the Transmission Control Protocol/Internet Protocol (TCP/IP) was fundamental to the open architecture networking that we see in use today. Work on this concept began in 1972, with the idea to promote the stability and integrity of the burgeoning internet.

Walled-off networks require every participant to be ‘authorized’ to access the network. This creates theoretical as well as practical problems. Who can be trusted with the role of the ‘door guard,’ and how can this entity be prevented from monopolizing access and keeping it out of reach of educational institutions or the general public?

And if such an entity were to fail or become unreachable, the internet would essentially be turned off, contrary to the objectives it was designed to achieve. The solution was to keep the internet as open as possible. Even today, practically anybody can connect to it and serve any content they want.

In fact, it was the popularity of early applications like email that gave researchers an insight into how people could communicate and collaborate with each other in the future. If closed-off networks became the norm, then the internet would never be able to have a truly seismic effect.

Applications built for one network would be useless for another and some would benefit at the expense of others. The key concept was to promote a unified playing field, a general infrastructure which new applications could be built on top of.

From AOL to Facebook, many corporations have attempted to build a wall around the internet for profit. Others have resisted this. The open nature of the internet has proven to be highly beneficial, encouraging collaboration even among fierce competitors.

Google, Microsoft, and Apple all collaborate on developing HTML, encryption, web languages, and other standards, because a more secure and vibrant internet helps them all. In turn, this also benefits millions of smaller organizations that can easily reach a global audience with their personal website or application.

TCP/IP enters the fray

The protocol used by ARPANET, the Network Control Protocol (NCP), required the network to be responsible for the availability of the connections within it. This created points of failure and also made it difficult to imagine ARPANET scaling to millions of separate devices.

Development of the new TCP/IP protocol was contracted to Stanford, BBN, and UCL, and it made each host responsible for its own availability. Within a year, the three teams had come up with three different versions, all of which could interoperate with one another.

Further research done by a team of researchers at MIT headed by David Clark sought to apply the TCP/IP protocol on early versions of the personal computer, namely the Xerox Alto and later on the IBM PC.

This proof of concept was critical: it showed that TCP/IP was interoperable and that it could be tweaked to meet the system capabilities of personal computers.

The early versions of transmitting data via TCP/IP made use of a packet radio system that relied on satellite networks and ground-based radio networks for seamless transfer. However, this approach was limited in the amount of bandwidth available, making transfer speeds slow. Ethernet cables, developed by Robert Metcalfe of Xerox, were the answer to the bandwidth conundrum.

The impetus behind the development of Ethernet was Xerox’s requirement to connect hundreds of computers in its facility to a new laser printer under development. Metcalfe was entrusted with this responsibility: He took it upon himself to build a new method of data transfer, fast enough that it could connect hundreds of devices simultaneously and assist users in real time.

Ethernet took approximately seven years to become the widely accepted technology it is today. Metcalfe’s work helped it become an IEEE industry standard in 1985 by significantly increasing upload and download speeds.

By New Year’s Day 1983, NCP had been made obsolete. The TCP/IP suite also introduced the now-iconic IPv4 addresses (later joined by IPv6) that will remain in use for the foreseeable future. For many, this date marks the beginning of the modern internet.

By the early 1980s, ARPANET had begun the transition away from NCP to TCP/IP protocols. While it was still the dominant network at the time, the shift to TCP/IP meant that ARPANET could be used by a far wider community of researchers and developers, and not just those in military and defense.

Email had proven to be one of the earliest use cases of the burgeoning internet, and the gradual adoption of TCP/IP protocols helped to supercharge its growth rate. There was rapid growth in the number of email systems, but all of them had the ability to send and receive electronic messages between one another.

Domain Name Servers help scale the web

Early internet networks such as ARPANET were limited in the number of hosts and therefore easy to manage. Hosts were assigned names so that users did not have to remember numeric addresses, and the limited scope meant it was possible to maintain a single database of the hosts and their associated addresses.

However, this model wasn’t scalable, and as advancements in Ethernet and LAN technology brought substantial growth in the number of hosts, there was a need to come up with an alternative system to classify host names.

Paul Mockapetris of the University of Southern California’s Information Sciences Institute invented the Domain Name System (DNS) in 1983. DNS, a decentralized naming system for computers and services connected to the internet, helped locate and identify hosts wherever they were in the world. It also assisted in categorizing hosts according to sector, such as news, healthcare, government, education, non-profit, and more.
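The decentralized part is easier to see in a toy model. The sketch below is only an illustration under stated assumptions: the `ZONES` table, the `resolve` function, and the addresses (taken from the reserved documentation range 192.0.2.0/24) are all hypothetical, and real DNS involves network queries, caching, and many record types. The point it shows is that no single table holds every name. Each zone knows only its own records and which child zones it delegates to.

```python
# Hypothetical zone data: the root zone is "", and each zone lists
# the child zones it delegates to plus the records it answers for itself.
ZONES = {
    "": {"delegates": {"org", "edu"}, "records": {}},
    "org": {"delegates": {"example.org"}, "records": {}},
    "edu": {"delegates": set(), "records": {}},
    "example.org": {
        "delegates": set(),
        "records": {"www.example.org": "192.0.2.10"},
    },
}


def resolve(name):
    """Follow delegations from the root toward the most specific zone.

    Returns (address or None, list of zones consulted along the way).
    """
    labels = name.split(".")
    zone = ""  # start at the root zone
    path = [zone]
    # Try progressively longer suffixes: "org", then "example.org", ...
    for i in range(len(labels) - 1, -1, -1):
        candidate = ".".join(labels[i:])
        if candidate in ZONES[zone]["delegates"]:
            zone = candidate
            path.append(zone)
    return ZONES[zone]["records"].get(name), path


address, path = resolve("www.example.org")
print(address, path)
```

Because each zone is managed separately, adding a new host under `example.org` in this model would touch only that zone's records, which is the property that let the real system scale.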

Advancements in router technology also meant that each region connected to the internet could establish its own architecture via the Interior Gateway Protocol (IGP). Individual regions would connect to each other via the Exterior Gateway Protocol (EGP); in the past this was not possible because routers depended on a single distributed algorithm that didn’t allow for differences in configuration and scale.

The time was right to help bring the internet to the mainstream.

The JANET network—the UK government’s answer to a centralized system to facilitate information sharing within universities in the country—was one of the first steps in this direction. Before the adoption of JANET, universities in the UK relied on their own individual computer networks, none of which were interoperable with one another.

JANET also helped policymakers understand that the internet could have a far-reaching impact, beyond just the use of military and space technologies.

The U.S. government was quick to follow suit as it established NSFNET, an offshoot of the National Science Foundation. NSFNET was the “first large-scale implementation of internet technologies in a complex environment of many independently operated networks” and “forced the internet community to iron out technical issues arising from the rapidly increasing number of computers and address many practical details of operations, management and conformance.”

In layman’s terms, it brought together disparate systems scattered across the U.S. under a unified banner, giving them the ability to communicate and transfer data.

For all practical purposes, the birth of NSFNET was the foundation of high-speed internet in the U.S. The number of internet-connected devices in the country grew from 2,000 in 1985 to more than 2 million in 1993. The growth of broadband internet in the U.S. can be directly attributed to NSFNET’s decision to make it mandatory for universities to expand internet access to all qualified users without discrimination.

Another crucial decision was to use the TCP/IP protocol across the NSFNET network. The credit for this farsighted move goes to Dennis Jennings, who moved from Ireland to spearhead the NSFNET program. Jennings’s successor, Steve Wolff, made another prescient decision: to encourage the growth of wide area networking infrastructure and to make the program financially self-sufficient, weaning it off its reliance on federal funding.

NSFNET’s stratospheric growth and use of the TCP/IP protocol meant that ARPANET was officially disbanded in the early 1990s. Public demand for personal computers and internet connections reached a fever pitch as private companies built their own networks, using TCP/IP to connect with one another.

Tim Berners-Lee and the start of the public internet

Tim Berners-Lee, widely known as the person who brought the internet to the public realm, was a software engineer at CERN, the European Organization for Nuclear Research, when he was tasked with solving a unique problem.

Scientists from all over the world would come to the research facility, but often would have difficulty sharing information and data. The only way to do so was via File Transfer Protocol (FTP). If you had a file that you wanted to share, you had to set up an FTP server while others interested in downloading it had to use an FTP client. The process was unwieldy and unscalable.

“In those days, there was different information on different computers, but you had to log on to different computers to get at it. Also, sometimes you had to learn a different program on each computer. Often it was just easier to go and ask people when they were having coffee,” explained Berners-Lee.

The engineer was acutely aware of fast-developing internet technologies and thought they could provide the answers he was looking for. He began work on an emerging technology called hypertext.

Berners-Lee’s initial proposals weren’t accepted by his manager, Mike Sendall, but by October 1990 he had tweaked his ideas. These would later be fundamental to the growth of the modern web. Specifically, he wrote the basis for:

- HyperText Markup Language (HTML): The standard language used to make websites and web applications.

- Uniform Resource Locator (URL): A unique address used to identify resources on the web. It also allows for the indexing of web pages.

- Hypertext Transfer Protocol (HTTP): A protocol that is used to transmit data.
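How a URL and HTTP fit together can be sketched in a few lines of Python. This is a simplified illustration rather than a full client: the helper name `build_get_request` and the example address are assumptions, and the sketch only builds the text of a request without sending it. It uses the standard library's `urllib.parse.urlsplit` to break the URL into parts, then places the host and path where an HTTP/1.1 request expects them.

```python
from urllib.parse import urlsplit


def build_get_request(url: str) -> str:
    """Build the text of a minimal HTTP/1.1 GET request for `url`."""
    parts = urlsplit(url)
    path = parts.path or "/"  # an empty path means the site root
    if parts.query:
        path += "?" + parts.query
    return (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {parts.netloc}\r\n"
        "Connection: close\r\n"
        "\r\n"  # a blank line ends the request headers
    )


# example.org is a reserved documentation domain, used here as a placeholder.
print(build_get_request("http://example.org/docs/index.html?lang=en"))
```

The server's reply would typically carry an HTML document, which is where the third building block comes in.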

Berners-Lee coded the very first website, which was available on the open internet by the end of 1990. The next year, people outside of CERN were invited to be a part of new web communities, and there was no looking back.

The proliferation of the global internet community is something that we take for granted today, but that wouldn’t have been possible without the intervention of Berners-Lee, who lobbied hard to ensure that the code for his discoveries would be available to the public on a royalty-free basis forever.

In 1994, Berners-Lee moved to MIT to found the World Wide Web Consortium (W3C), where he remains to this day. The W3C is an international community that’s dedicated to the open and unharnessed web and strives to develop decentralized, universal standards that can help the internet permeate all parts of the globe.

Because of the work of Berners-Lee, we benefit from the following standards:

- Accessibility: Nothing on the internet can be controlled by a centralized node, i.e., there is no need to ask permission before posting on the web. Censorship and surveillance are not native functions of the web and have to be applied either on the edges (by ISPs and firewalls) or by those hosting your content (such as social media sites).

- Non-discrimination: The concept that two packets are always treated the same, no matter their content, origin, or destination. See also: Net neutrality.

- Universality: The internet should cater to everyone, regardless of a user’s hardware or political beliefs. Hence, all devices involved have to be able to speak to one another.

- Consensus: The adoption of universal standards by all stakeholders across the globe so that no corner of the internet can be considered “walled-off.”

All of these standards repeatedly find themselves under attack. They continue to have to be actively defended and fought for.

Encryption becomes an integral part of the internet

Notice that little padlock on your browser next to the website address? That indicates the connection between you and the site is encrypted, making it difficult for the government or your internet service provider to decipher what you do on the web.

Encryption makes everyday activities like e-commerce, video calls, and logging on to email servers far more secure. While it’s now considered to be an internet staple, with public outcry over services that aren’t encrypted, encryption wasn’t always considered to be an inalienable part of the interwebs.

Encryption refers to the process of converting data into a form that is unrecognizable and unreadable to anyone but the intended recipient. That means nosy third parties such as hackers, governments, or ISPs can’t intercept the data and snoop on its contents.

The word derives from the Greek “kryptos,” which means hidden or secret. Cryptography itself dates back thousands of years, with civilizations such as the ancient Greeks and ancient Egyptians relying on cryptographic methods to relay sensitive information, primarily in trade and military contexts.

In the context of the internet, however, cryptography was primarily the domain of the government and spy agencies until Whitfield Diffie introduced the concept of “public key cryptography” in 1975.

Before Diffie’s groundbreaking discovery, data was secured in a “top-down” approach. This meant files were protected by passwords and then stored in electronic vaults controlled by system administrators. The problem with this approach was that security was up to the whims of the administrators themselves, who often had little to no incentive to safeguard private information.

“You may have protected files, but if a subpoena was served to the system manager, it wouldn't do you any good,” Diffie noted. “The administrators would sell you out, because they'd have no interest in going to jail.”

Diffie, inspired by the book The Codebreakers, took his passion to Stanford where he attempted to come up with a solution. He understood that the most logical answer would be a decentralized system where recipients would hold their own keys, without relying on a third party, but he lacked the mathematical nous to engineer it on his own.

Diffie knew there were serious applications of this technology. He foresaw a future where all forms of business could be conducted electronically, using “digital” signatures. Public-key cryptography, which Diffie revealed in 1975 after collaborating with Martin Hellman, was the radical breakthrough the encryption industry needed.

The concept of public-key cryptography hinges on two elements. Every user possesses two keys: a public key and a private one. The public key can be distributed to anyone interested in encrypting messages for you or verifying your signature. The private key is held by you only and is used to decrypt messages as well as sign them.
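The two-key idea can be tried out with a toy example. The Python sketch below uses RSA-style arithmetic with deliberately tiny primes so the numbers stay readable. Everything about it is an assumption made for illustration: the primes 61 and 53, the exponent 17, and the function names are not from any real system, and the scheme omits the padding and large key sizes that real-world use requires. It is not secure and is only meant to show which key does what.

```python
# Toy key generation with tiny primes (illustration only, not secure).
p, q = 61, 53
n = p * q                      # modulus shared by both keys
phi = (p - 1) * (q - 1)
e = 17                         # public exponent, coprime with phi
d = pow(e, -1, phi)            # private exponent (requires Python 3.8+)

PUBLIC_KEY = (e, n)            # safe to hand out to anyone
PRIVATE_KEY = (d, n)           # kept by the owner only


def encrypt(message: int, public_key) -> int:
    """Anyone can encrypt for the owner using the public key."""
    exponent, modulus = public_key
    return pow(message, exponent, modulus)


def decrypt(ciphertext: int, private_key) -> int:
    """Only the private key holder can recover the message."""
    exponent, modulus = private_key
    return pow(ciphertext, exponent, modulus)


def sign(message: int, private_key) -> int:
    """The owner signs by applying the private key to the message."""
    exponent, modulus = private_key
    return pow(message, exponent, modulus)


def verify(message: int, signature: int, public_key) -> bool:
    """Anyone can check a signature using the public key."""
    exponent, modulus = public_key
    return pow(signature, exponent, modulus) == message


message = 65  # messages here must be integers smaller than n
assert decrypt(encrypt(message, PUBLIC_KEY), PRIVATE_KEY) == message
assert verify(message, sign(message, PRIVATE_KEY), PUBLIC_KEY)
```

Note the symmetry: encryption and signature checking use the public key, while decryption and signing use the private one.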

Diffie’s concept received a boost in 1977 with the creation of the RSA encryption algorithm. Invented by three MIT scientists (Rivest, Shamir, and Adleman), RSA was an alternative to the government-sanctioned Data Encryption Standard (DES), a notoriously insecure encryption standard that didn’t use public keys and was limited to keys 56 bits in length.

RSA could work with a much larger key size, thereby making it far more secure. Plus it used public keys and was scalable to an extent that would serve the needs of the burgeoning crypto community.

The problem with RSA was that the patents on its algorithms were held by RSA Data Security. While the company said its mission was to promote privacy and authentication tools, the fact that the rights to such an important technology were held by one organization was unacceptable to activists known as cypherpunks.

Cypherpunk, a portmanteau of cipher and cyberpunk, began as an online community from a small mailing list in 1992. Early cypherpunks were obsessed with topics such as privacy, snooping, and government control over the free flow of information. They didn’t want cryptography to be the domain of a single actor, whether a corporation or a government.

The cypherpunks believed that the internet would be a liberating tool that could allow humans to interact without authoritarian interference. They believed that the free flow of information would topple authoritarian regimes all over the world, or at least make it possible to hold them accountable. The cypherpunks were obsessed not only with making information impossible to track, control, and censor, but also with creating electronic money outside the reach of central banks.

The answer to their dilemma came with the work of Phil Zimmermann, an anti-nuke campaigner who believed that privacy was too important to be left unaddressed. A talented coder in his own right, Zimmermann believed it was possible to create a public-key system on personal computers using RSA algorithms.

He chipped away at his ideas, and in 1991 he came up with “Pretty Good Privacy” or PGP. With PGP, messages were converted into unreadable ciphertext before being transmitted over the internet. Only the intended recipient had the key to convert the text back into a readable format. What’s more, PGP also verified that the message had not been tampered with in transit and that the sender was who they claimed to be.

Secured by RSA encryption, PGP spread like wildfire across the internet and became a darling of the privacy community.

The global rollout of PGP also played a major role in preventing the U.S. government from promoting the Clipper Chip, a chipset developed by the NSA that incorporated a government backdoor.

Clipper Chip’s underlying cryptographic algorithm, called Skipjack, enabled a feature that allowed the government to intercept any conversation while in transit. Thankfully, Clipper Chip was universally decried by privacy advocates including bodies such as the EFF. It never reached a semblance of critical mass, and backdoors never really got off the ground.

The mid-1990s marked a flurry of developments in the rollout of high-speed broadband internet and consumer products such as browsers and web languages. Encryption was no different: 1995 saw the release of the first protocol behind HTTPS, dubbed “Secure Sockets Layer,” or SSL.

HTTPS—which preserves the privacy and integrity of data while in transit and assists in protecting against things like man-in-the-middle attacks—got an upgrade with the implementation of “Transport Layer Security,” or TLS, in 1999. Although HTTPS is now used by most websites, the protocol took a while to gain mass acceptance.
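On the client side, much of this protection comes down to refusing connections that fail verification. The Python sketch below is one hedged way to express that with the standard library's `ssl` module. The helper name `strict_client_context` and the choice of TLS 1.2 as a floor are assumptions made for illustration, not a recommendation drawn from this article. The sketch only builds the settings object and does not open a network connection.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Create client-side TLS settings that verify the server's identity."""
    # The default context requires a valid certificate chain and checks
    # that the certificate matches the hostname being contacted.
    context = ssl.create_default_context()
    # Assumption for this sketch: refuse SSL and TLS versions below 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = strict_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
```

Certificate and hostname checks are what make a man-in-the-middle attack difficult: an attacker who intercepts the connection would need a certificate that a trusted authority has issued for the site's name.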

Porting sites over to HTTPS was a challenge as it cost both money and time. Many webmasters didn’t see the benefits of HTTPS outside of e-commerce and banking contexts. However, concerted efforts from the likes of Mozilla, WordPress, and Cloudflare systematically lowered the barriers to entry for HTTPS by removing both the cost and the technical hurdles.

In 2015, Google announced it would start preferring HTTPS secured sites in its search results, declaring a secure web to be a “better browsing experience.” In 2017, more than half of all websites were secured by HTTPS, a number that’s swelled to nearly 85% today.

Search engines and web browsers emerge

Archie, a name derived from “archive,” was the first ever internet search engine. Programmed by Alan Emtage and Bill Heelan of McGill University in 1990, the application helped build a database of web file names. While not terribly efficient, it provided a prescient indicator of how to archive and retrieve information from across the internet.

Early search engines weren’t able to crawl the entire web. They relied on an existing index in the same vein as telephone books. Users were encouraged to submit their sites to the search engine in order to expand the central repository.

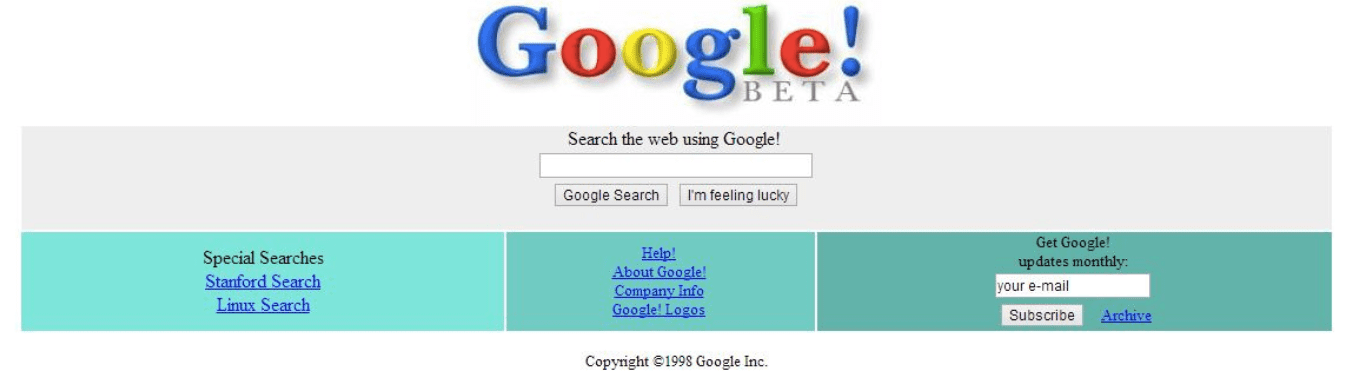

The first search engine to actively use web crawlers was called WebCrawler. And before they launched Google, Sergey Brin and Larry Page worked on a search algorithm called BackRub, which took into account backlinks for search.

Google officially launched in 1998 with a superior crawling algorithm to its competition. Slowly others like Lycos, Ask.com, and Yahoo began to fall by the wayside.

The popularity of search engines grew following the decision of the U.S. government to expand broadband access across the country. Senator Al Gore helped pass the High Performance Computing Act in the early 1990s, which provided a 600 million USD fund to build internet infrastructure in the U.S.

A resulting body called the National Information Infrastructure was required by law to ensure internet access to members of the U.S. public without prejudice or discrimination. All U.S. classrooms were to be connected to the internet by the year 2000, and federal agencies had to build websites and put information online so that it could be accessed by the public at will.

Some of the funding under the High Performance Computing Act went to the team at the National Center for Supercomputing Applications (NCSA) at the University of Illinois at Urbana-Champaign. They came up with Mosaic, the world’s first mass-market web browser, which helped propel the internet from a tiny niche community to widespread public use.

Marc Andreessen, one of the programmers at NCSA, later quit his role to establish Netscape Communications. There he oversaw the development of Netscape Navigator in 1994, a significant upgrade to Mosaic. Netscape Navigator allowed rich images to be displayed on screens as they downloaded, as opposed to Mosaic’s practice of displaying the image only after the web page had loaded completely. This significantly enhanced the web experience, leading to hundreds of thousands of downloads.

The mid-1990s was the golden age of the internet, marked by the rapid proliferation of high-speed broadband internet, the emergence of feature-rich web browsers, the first hint of future consumer activity such as online shopping and live streaming, as well as the growth of the internet as a modern-day research tool replacing traditional encyclopedias.

Heavyweights such as Apple and Microsoft had begun to dominate the personal computer wars and Microsoft, in particular, wanted a piece of the browser pie. Its decision to bundle Internet Explorer with its Windows operating system was a move to directly challenge the dominance of Netscape Navigator. Microsoft also leaned on its OEM vendor relationships and a 150 million USD investment in Apple to ensure that internet adopters everywhere got a taste of Internet Explorer first. That later led to an antitrust lawsuit, but Netscape’s market position had dwindled by then, especially after its acquisition by AOL.

Web communities were thriving in the mid to late 1990s. While the internet had largely been a way to serve science and research needs, ordinary users found that they could use the internet for things like sports, music, entertainment, news, and discussion forums. This, coupled with the proliferation of broadband internet, greatly transformed the overall web experience.

JavaScript, a core web technology powering the development of feature-rich sites and apps, was also engineered in 1995 by Brendan Eich, who worked at Netscape Communications at the time. The language was developed largely to counter Microsoft’s looming threat by building a platform that Bill Gates couldn’t have access to. In time, however, JavaScript became an important language in its own right.

JavaScript helped make the web immersive. Developers used it to embed videos, plugins, and other features directly into a website’s source code. This helped speed up load times, catering to the relatively lower bandwidth availability during those early days.

Other languages such as Java and PHP also entered the mainstream, allowing for things like content management systems, web templates, and further customizations.

Jeff Bezos launched Amazon in 1994, with an initial focus on selling books. Bezos, a highly paid analyst at a quantitative hedge fund, quit his job on Wall Street to start the company. With initial seed capital of 250,000 USD, Bezos gambled correctly that the internet would soon dominate U.S. households, and that he had to move quickly if he wanted to be one of the first to capitalize on this trend.

“I came across the startling statistic that the web was growing at a rate of 2,300% a year, so I decided to come up with a business plan that would make sense in the context of that growth,” he said in one of his first interviews.

A famous memo penned by Bill Gates in 1995 referred to the internet as the “coming tidal wave,” reflecting the thoughts of most tech leaders about what lay ahead.

“The Internet is the most important single development to come along since the IBM PC was introduced in 1981. It is even more important than the arrival of the graphical user interface (GUI),” wrote Gates. “I think that virtually every PC will be used to connect to the Internet and that the Internet will help keep PC purchasing very healthy for many years to come.”

Growth of online communities and social media

One of the internet’s most revolutionary aspects has been the creation of online communities, unencumbered by national borders. The first step in this direction came in the form of Usenet in 1979. Usenet, short for Users Network, was conceptualized by graduate researchers Tom Truscott and Jim Ellis of Duke University. Based on UNIX architecture, Usenet got its start through three networked computers physically located at UNC, Duke, and Duke Medical School.

While the early versions of Usenet were slow and cumbersome to manage, the technology whetted public appetites for a virtually unlimited amount of information and knowledge. Usenet helped spawn online communities and discussion forums.

Delphi Forums, one of the first forum sites on the internet and still in existence today, started in 1983. Others like Japan’s 2channel are equally popular. But the real impetus for the growth of online communities came as the internet proliferated throughout the world.

Six Degrees, the world’s first social media site, launched in 1997. It allowed users to sign up with email addresses and “friend” one another, and had 1 million members at its peak.

Well-known web hosting service GeoCities, originally called Beverly Hills Internet, started in 1994. It allowed users to build their own webpages and categorize them according to interest groups.

Internet Relay Chat helped bring chat rooms to life, with mIRC a popular client for Windows. Instant messaging apps such as AOL Instant Messenger and Windows Messenger followed this trend.

ICQ, one of the most popular standalone instant messaging apps, which boasted 100 million users at its peak, started in 1996.

Early social media sites such as Orkut, Friendster, and MySpace were all introduced in the 2000s. They enjoyed a wave of success until Facebook, which launched in 2004, started to spread its wings.

The rest, as they say, is history.

The start of internet censorship

The first hints of internet censorship came in the form of the Communications Decency Act of 1996, passed by the U.S. Congress. It was brought about in response to the vast amount of pornographic material uploaded to the web, with petitioners arguing that it violated decency provisions and might end up exposing minors to such content.

By this time, advocacy bodies such as the Electronic Frontier Foundation (EFF) had also set up shop, campaigning for the neutrality of the internet as envisioned by Tim Berners-Lee. John Perry Barlow, a co-founder of the EFF, wrote an impassioned appeal to keep the spirit of net neutrality alive and to warn against any attempts to filter content.

The Digital Millennium Copyright Act was passed in 1998, criminalizing the production and distribution of any form of technology that could assist in circumventing measures that guarded copyright mechanisms.

The act may have been well-intentioned, but it’s received its fair share of criticism over vague and misleading terminology. The EFF in particular says that the DMCA chills free expression and stifles scientific research, jeopardizes fair use, impedes competition and innovation, and interferes with computer-intrusion laws.

Censorship wasn’t restricted to the U.S. The “Great Firewall of China” was launched in 1998 to filter the kind of content available to the then very few Chinese users.

Internet censorship has become more invasive and more complex over time. The decentralized nature of the web gives netizens the ability to express their opinions freely online, spawning a range of discourse and special interest groups.

Unsurprisingly, the internet isn’t a medium favored by repressive and authoritarian governments. They can’t manipulate it outright, so they must take steps to restrict access.

Web 2.0 helps drive further adoption

In 1996, there were approximately 45 million global internet users. This figure swelled to over 1 billion users by 2006, assisted by the emergence of the internet as a sharing and collaborative platform rather than one geared toward techies and engineers.

This shift, accelerated by the dot-com crash of 2000, transformed websites and web applications from static to dynamic HTML. High-speed internet connections had become the norm, allowing for feature-rich sites and apps. The emergence of sites such as Facebook, Twitter, WordPress, BitTorrent, Flickr, Napster, and more resulted in a flood of user-generated content.

Ordinary people, despite not being able to write code, could still publish blogs, start their own websites, open social media accounts, and build portfolios. The term “software as a service” started to emerge; applications could be accessed directly from the web, sidestepping the need to buy CDs and install software on personal computers.

Google, which started in 1998, became closely associated with the Web 2.0 phenomenon. It built its entire software stack on the premise that users wanted convenience and ease of use. Starting from search, it spread its wings into email, location services, reviews, maps, and file sharing entirely on the internet. High-speed internet was the catalyst, but Sergey Brin and Larry Page were nimble enough to predict where the market was headed.

The introduction of smartphones and 3G internet

Internet accessibility on personal computers helped bring the technology to the masses, but mobile broadband networks have had a big role to play too.

2G networks, which launched commercially in Finland in 1991, gave mobile users enhanced voice communication as well as SMS. Data services for mobile became popular with the adoption of the EDGE network, the launch of which coincided with Web 2.0.

But what really kickstarted the mobile-device revolution was the introduction of cheap smartphones, associated app stores, and 3G connectivity.

Smartphone tech first piqued public interest with the growth of BlackBerry devices manufactured by Canadian company Research in Motion. At its peak, BlackBerry claimed 85 million subscribers. The phones could connect to Wi-Fi, allowed for real-time chat through the BlackBerry Messenger platform, and made a modest attempt to bring in vetted third-party apps.

3G networks, first introduced by Japanese provider NTT DoCoMo in 2001, helped bring standardization to mobile network protocols. Just as TCP/IP had allowed for interoperability among disparate networks attempting to connect to the internet, 3G was, for the first time, a global set of mobile data standards. Not only was it four times faster than 2G, it also allowed for things like international roaming, video conferencing, voice over IP, and video streaming.

BlackBerry benefited from the global rollout of 3G. It became the phone of choice for high-flying executives and business users as they could check their email on the fly and stay connected regardless of where they were in the world.

However, BlackBerry failed to evolve as smartphones made the leap from phones with internet access to pocket computers with phone access. The arrival of iOS and Android, along with their respective application stores, was a death knell for the company.

Cheaper devices bring the developing world online

Apple officially launched the iPhone in 2007, about a year ahead of Android, but it’s Google’s OS that has dominated the smartphone wars since.

Apple makes billions of dollars from its range of iPhones and corresponding sales on the App Store, but Google’s decision to offer Android to OEMs meant that it benefited from network effects and put the onus on hardware manufacturers to grow the popularity of their devices.

The first Android smartphone, the T-Mobile G1, went on sale in October 2008. Built by HTC, the phone featured a QWERTY-style keyboard akin to BlackBerry devices. However, it was one of just a handful of Android phones with this keyboard arrangement, as the popularity of touchscreen phones was taking off.

Android phones, which can retail for as low as 50 USD, currently account for 86% of global smartphone market share. This affordability, coupled with the near-universal adoption of 3G and 4G networks, helped bring vast swaths of the developing world online.

While internet growth in Western countries such as the U.S., Canada, and the UK was predicated on the mass rollout of high-speed fiber and broadband networks, in developing countries, mobile networks were cheaper to set up, with rapidly decreasing costs for data services.

Plus, not everyone could afford to fork out 500 USD for a laptop or desktop. Inexpensive smartphones helped bridge the gap, allowing users to do the same things on their device as they would on a laptop, such as browse social media, watch YouTube videos, play games, make video calls, and read news.

Both the iOS and Android platforms began to offer versions of their OS in local languages too, making them even more marketable.

In 2016, global mobile internet use overtook desktop use for the first time, confirming the ubiquity of smartphones and how they had helped much of the global population leapfrog into the internet age.

What’s the future of the internet?

As of April 2020, there were over 4.5 billion internet users across the world, representing a penetration rate of approximately 59%.

That’s massive growth when you consider that we’ve gone from a handful of users in 1990 to billions today, but there’s still work to be done to bring the internet to all parts of the globe. With over 40% of the global population offline, the coming decades will focus on expanding internet access to far-flung areas using satellites and internet-connected balloons.

The U.N. Human Rights Council passed a non-binding resolution in 2016 treating internet access as a basic human right, encouraging governments to invest in mobile and fixed broadband infrastructure and making it a key part of the Sustainable Development Goals (SDGs).

Countries with high internet penetration are likely to witness further proliferation of IoT devices and smart gizmos. The launch of 5G, while riddled with controversy, is likely to accelerate in the coming months and years.

Such ultra-high-speed networks are likely to create thousands of new jobs and enable widespread use of smart and internet-connected devices. It’s not hard to imagine a future where subway cars alert waiting passengers about current capacity and vehicles avoid accidents by “talking” to one another, as well as dystopian scenarios such as facial-recognition cameras tracking our moves and relaying them back to a centralized database.

The World Wide Web Consortium (W3C), founded by Tim Berners-Lee, is already working on a new concept that it calls the “Semantic Web.” With this, it hopes to build a technology stack to allow machines to access an “internet of data” or a database of all the data in the world. The end goal, according to Berners-Lee himself, is to see a future where “the day-to-day mechanisms of trade, bureaucracy, and our daily lives will be handled by machines talking to machines.”

The internet has come a long way—but in the larger scheme of things, it’s only just begun.
